
Overview of TensorFlow.js and the Edge (0:00)

We’re talking about TensorFlow.js, and then a little bit about the Edge. I did a talk about TensorFlow.js back in June with Chris Fregly, when it had just come out. Mostly I talked about how TensorFlow.js worked, but I didn’t really do any demos or show off any code. So I decided to challenge myself to prove I know how this stuff works. That’s my goal with this presentation, and we’ll discuss whether I got it down.

The big point I was trying to make last time was that JavaScript may not be the cool language for machine learning. It’s more like the skater punk kid of programming languages, but I think you need to have it on your radar because JavaScript is a force to be reckoned with.

We’ll look at some of the stuff that’s happened since June. Then I’ll show you my partially working TensorFlow.js and Cloudflare demo.

This picture is a humorous description of the languages.

Most people who are good at science tend to like Python or R.

A lot of people use Java for production heavy stuff.

I like the image of JavaScript because oftentimes I feel like JavaScript is this Frankenstein monster of things combined.

Then you have Haskell which is like the purity of language.

BF gets a shout out because with enough semicolons, a functional programmer can move the world. Everybody gets a shout out here.

The Different Machine Learning Levels (2:40)

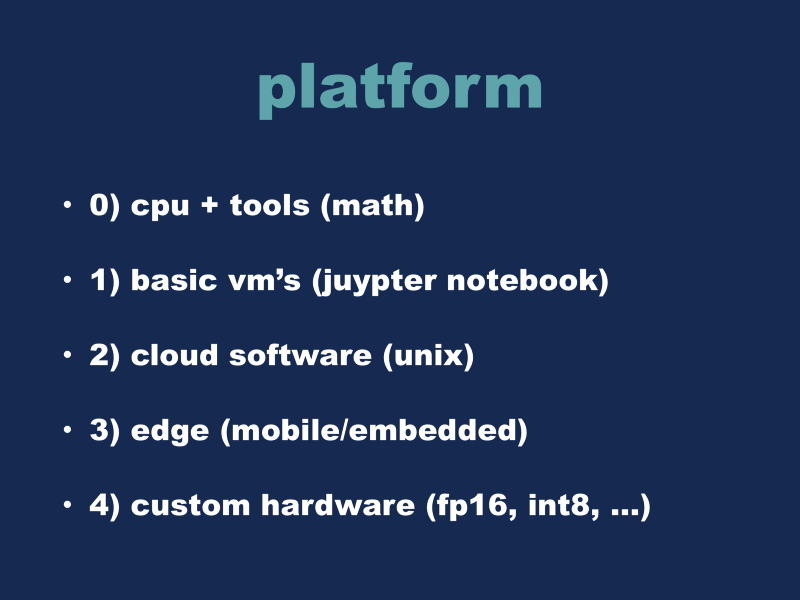

The slide above is very broadly how I think about the different levels when approaching these problems.

At the highest level, you can think of machine learning as algorithms and math.

In the real world, you’ll probably have to use some sort of virtual machine in order to get your performance. That’s where you get into Jupyter Notebooks. The next level from that is when you’re orchestrating full-blown cloud systems, with Unix in some form or variant.

Then we come to the Edge. I am a mobile programmer; it’s what I’ve been doing for the better part of a decade now. Mobile, iOS, and Android devices are my bread and butter. I think anything can become an embedded environment: take a laptop, put it in the front of a car, and you potentially have a self-driving car, right? I think this level is harder, because with the cloud you can often throw resources at a problem, whereas on a mobile phone you have very hard resource and memory constraints.

Then there’s custom hardware. This is what a lot of hardware companies are doing right now. For more about this, check out my TensorFlow and Swift talk. But for now, we’re going to talk about level 3, the Edge.

Edge — Mobile/Embedded (4:20)

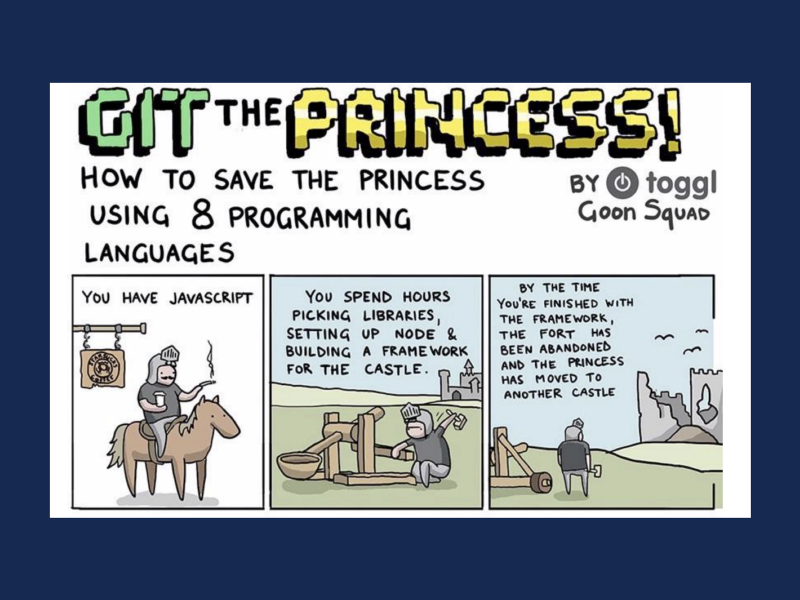

This comic describes how to save the princess using 8 different programming languages. This is the one for JavaScript, and here it says you spend hours building the framework for the “castle,” but by the time you’re finished, the “fort” has been abandoned and the princess was moved to another castle. Packages become obsolete, and often, that’s how it works.

All these other companies have plans and multi-year goals, but in the end, it just comes down to random Brownian motion with JavaScript. Every three months, the JavaScript universe changes. Sometimes it goes forwards, sometimes backwards. We can say Apple is maybe 2 or 3 generations ahead, but I think JavaScript is going to catch up eventually.

Five Easy Demos (6:05)

Here are some demos I found on the Internet that I thought were cool.

storage.googleapis.com/tfjs-examples/mnist/dist/index.html (6:20)

This one downloads the MNIST dataset and trains a model on it in real time in the browser, showing off the loss and training curves. We get to the end here and it shows all its predictions. The handwritten 8 looks like a 5, so it guessed wrong, but you can’t get too angry at the categorization algorithm. It’s doing its best.
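To make the "8 that looks like a 5" case concrete, here is a minimal sketch of how a digit classifier turns ten raw scores into a prediction and a loss value. It is plain JavaScript, not the demo's TensorFlow.js code, and the scores in `logits` are made up for illustration.

```javascript
// Plain-JavaScript sketch (no TensorFlow.js); the scores below are invented.

// Convert raw class scores (logits) into probabilities that sum to 1.
function softmax(logits) {
  const max = Math.max(...logits); // subtract the max for numerical stability
  const exps = logits.map((x) => Math.exp(x - max));
  const sum = exps.reduce((a, b) => a + b, 0);
  return exps.map((e) => e / sum);
}

// Cross-entropy loss for one example: -log(probability of the true class).
function crossEntropy(probs, trueLabel) {
  return -Math.log(probs[trueLabel]);
}

// The predicted digit is the index of the largest probability.
function argmax(values) {
  return values.indexOf(Math.max(...values));
}

// Hypothetical scores for a sloppy "8": class 5 narrowly beats class 8.
const logits = [0.1, 0.0, 0.2, 0.3, 0.1, 2.4, 0.2, 0.0, 2.1, 0.4];
const probs = softmax(logits);
const predicted = argmax(probs);
const loss = crossEntropy(probs, 8);

console.log(`predicted ${predicted}, loss for true label 8: ${loss.toFixed(3)}`);
```

The loss curve in a demo like this is typically this kind of per-example loss averaged over a batch, plotted as training goes on.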

Modeldepot.github.io/tfjs-yolo-tiny-demo/ (7:02)

This one is a cool demo, but it mostly only works in Chrome. It uses the webcam and runs the YOLO object recognition network. You can see it’s tracking me.

Magenta.tensorflow.org/js-announce (7:42)

This is Magenta, which is like a Google audio and arts project. It plays musical beats when you select the different buttons.

Poloclub.github.io/ganlab (8:25)

This is a demo of how GANs work. It visualizes how the algorithm learns the probability distribution. I personally can’t conceptualize how GANs work, so it’s cool for me to see how it works in the real world.

Blog.mgechev.com/2018/10/20/transfer-learning-tensorflow-js-data-augmentation-mobile-net/ (9:12)

I saw this one last week and thought it was cool. I won’t actually demo it, but they took MobileNet and retrained it to recognize gestures. So you input some motions and actions through the webcam, and you can virtually kick and punch in a fighting game.

How Astronet Works (9:55)

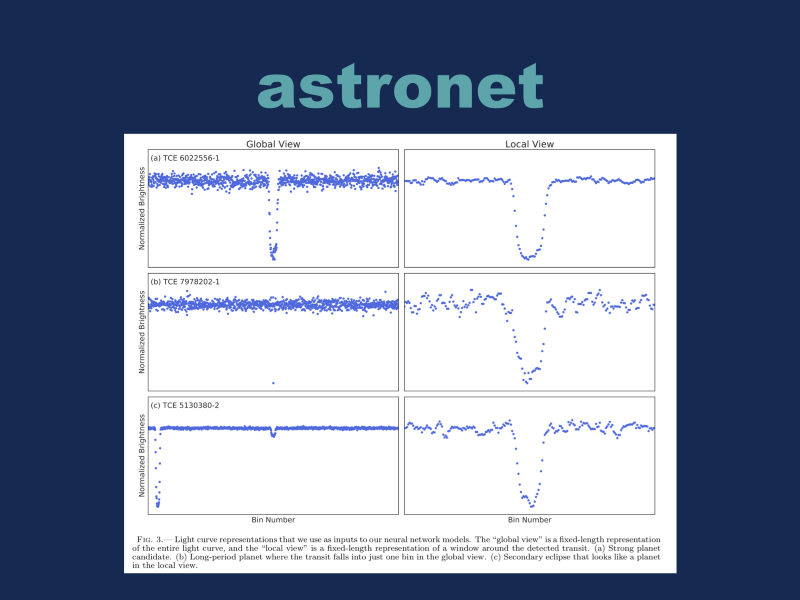

This is a paper from February called Astronet. It’s more like traditional TensorFlow, as opposed to TensorFlow.js, but I thought this was cool. In the top row, the two columns show what a light curve from an ideal star would look like. We have a dip over here, and that’s basically a planet passing in front of its star. It’s detecting the change in magnitude as the planet passes through. The second column is showing just the specific information for the dip in the light curve (the small gap), if that makes sense.

The second row is what a real-world planet observation would more than likely look like. It’s literally one little dot right here. That’s the only actual sample that the cameras picked up from the star. They were watching the star, but they only barely caught the planet passing in front of it. So basically, they separate that signal from the noise, then go back and gather more data points. As you gather more samples, you detect that there is a planet there. Then you update your gravitational models of the star. Then you start finding secondary signals, other planets around that star: you find one, and from that you find another. That’s how they find new planetary systems. You can check it out and see how it works at https://github.com/google-research/exoplanet-ml.
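As a toy illustration of the "dip" in a light curve, the sketch below builds a synthetic brightness series and flags the samples that fall below the baseline. This is a simple threshold check, not the neural network approach used in the Astronet paper, and every number in it (series length, transit position, depth, threshold) is an assumption chosen for the example.

```javascript
// Toy light curve: normalized brightness of 1.0 with one dip (made-up numbers).
function makeLightCurve(length, transitStart, transitLength, depth) {
  const flux = [];
  for (let i = 0; i < length; i++) {
    const inTransit = i >= transitStart && i < transitStart + transitLength;
    flux.push(inTransit ? 1.0 - depth : 1.0);
  }
  return flux;
}

// Median of a list of numbers, used as the star's baseline brightness.
function median(values) {
  const sorted = [...values].sort((a, b) => a - b);
  const mid = Math.floor(sorted.length / 2);
  return sorted.length % 2 === 0 ? (sorted[mid - 1] + sorted[mid]) / 2 : sorted[mid];
}

// Return the indices where brightness drops below the baseline by more than `threshold`.
function findDips(flux, threshold) {
  const baseline = median(flux);
  const indices = [];
  flux.forEach((value, i) => {
    if (baseline - value > threshold) indices.push(i);
  });
  return indices;
}

// 100 samples, with a 1% dip lasting 5 samples starting at index 40.
const flux = makeLightCurve(100, 40, 5, 0.01);
const dips = findDips(flux, 0.005);
console.log(dips); // indices 40 through 44
```

Real observations are noisy and the dip may be a single sample, which is why a fixed threshold like this is not enough in practice and a learned model is attractive.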

Mobilenets and TensorFlow.js Demo (13:17)

This is the demo I wanted to do. I’ll explain what happened, because it didn’t quite get there. I wanted to take TensorFlow.js and use the MobileNet model. It’s very small, about 17 MB for all the data, which makes it easy to shoot down the wire to something like a phone. Then I wanted to use a cloud worker server, with Cloudflare as the proxy worker, so that in the end you get a server deployed.

The first part of this was actually the easiest. I went on the internet and found James Thomas, who is a mobile advocate for IBM. He has written a whole MobileNet TensorFlow.js program in JavaScript, right here. You should go read his blog posts. You can go through it here, and it outputs the results. He walks through all the tricks. This is what the actual MobileNet TensorFlow code looks like. It’s a little bit larger than the screen, but it’s pretty understandable.

jamesthom.as/blog/2018/08/13/serverless-machine-learning-with-tensorflow-dot-js/ (14:30)

So, I have the Cloudflare routes strings working. In the body, we have the JavaScript worker. This is like the config file, then the package file by itself. This is what your actual JavaScript file looks like. This is what I had, and so we’ll make a change…

We’ll run our server and deploy it… it packages everything up and ships it off to the server. It’ll take a couple of seconds for it to work. There you have it. The new worker is running on Cloudflare. They have 150 server locations or so. If you have users around the world, they’ll get optimal performance.
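For readers who have not seen one, a Worker in Cloudflare's service-worker style looks roughly like the sketch below. This is not the code from the talk: the `route` and `handleRequest` helpers, the `/classify` path, and the response bodies are all hypothetical, and no model is loaded here. Only the `addEventListener('fetch', ...)` registration and `event.respondWith` come from the Workers runtime.

```javascript
// Pure routing logic, kept separate so it can also run outside Cloudflare.
// The "/classify" path and the response shapes are hypothetical.
function route(method, pathname) {
  if (pathname === '/classify' && method === 'POST') {
    // Placeholder: a real worker would run the model on the uploaded image here.
    return { status: 501, body: { error: 'classification is not implemented in this sketch' } };
  }
  if (pathname === '/') {
    return { status: 200, body: { message: 'worker is running' } };
  }
  return { status: 404, body: { error: 'not found' } };
}

// Turn an incoming Request into a JSON Response using the routing table above.
async function handleRequest(request) {
  const { pathname } = new URL(request.url);
  const { status, body } = route(request.method, pathname);
  return new Response(JSON.stringify(body), {
    status,
    headers: { 'content-type': 'application/json' },
  });
}

// Register the handler only where a Worker-style global listener API exists.
if (typeof addEventListener === 'function') {
  addEventListener('fetch', (event) => event.respondWith(handleRequest(event.request)));
}
```

Keeping the routing in a plain function means it can be checked locally before the script is packaged up and shipped to the edge.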

I attempted to get this workflow working with Cloudflare directly, but the process was peculiar. This implementation is tied to a Docker runtime, which is a custom thing that IBM ships as part of their OpenWhisk implementation. One of the catches of their platform is that to move to a different system, you must rebuild that whole ecosystem. I started messing with it, but it’s not working right now. I had a bunch of help from a guy named Sean Graham, so I’d like to give a shout out to him. I’ll jazz it up and push it out in the next week or so, but the TensorFlow.js part works.